1. Joint Probability Function

Joint probability distributions can be defined for discrete random variables and for continuous random variables. In this post I want to deal only with discrete random variables and their joint probability function.

Let $Y_1$ and $Y_2$ be discrete random variables. The joint probability distribution for $Y_1, Y_2$ is given by

$$p(y_1, y_2) = P(Y_1 = y_1, Y_2 = y_2), \quad -\infty < y_1 < \infty, \; -\infty < y_2 < \infty$$

The function $p(y_1, y_2)$ will be referred to as the joint probability function. [from Mathematical Statistics with Applications]

It satisfies the following two properties.

- 1. $p(y_1, y_2) \geq 0$ for all $y_1, y_2$

- 2. $\sum_{y_1, y_2} p(y_1, y_2) = 1$
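The two properties above can be checked mechanically. Here is a minimal sketch, assuming the joint probability function is stored as a dictionary from outcomes $(y_1, y_2)$ to probabilities. The helper name `is_valid_joint_pmf` and the two-coin example are my own illustration, not something from the textbook.

```python
from fractions import Fraction


def is_valid_joint_pmf(pmf):
    """Return True if all probabilities are non-negative and they sum to 1."""
    values = list(pmf.values())
    non_negative = all(p >= 0 for p in values)  # property 1
    sums_to_one = sum(values) == 1              # property 2
    return non_negative and sums_to_one


# Hypothetical example: two independent fair coin tosses (Y1, Y2).
coin_pmf = {
    ("H", "H"): Fraction(1, 4),
    ("H", "T"): Fraction(1, 4),
    ("T", "H"): Fraction(1, 4),
    ("T", "T"): Fraction(1, 4),
}

print(is_valid_joint_pmf(coin_pmf))
```

I used `Fraction` instead of floats so that the sum is compared with 1 exactly, without rounding error.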

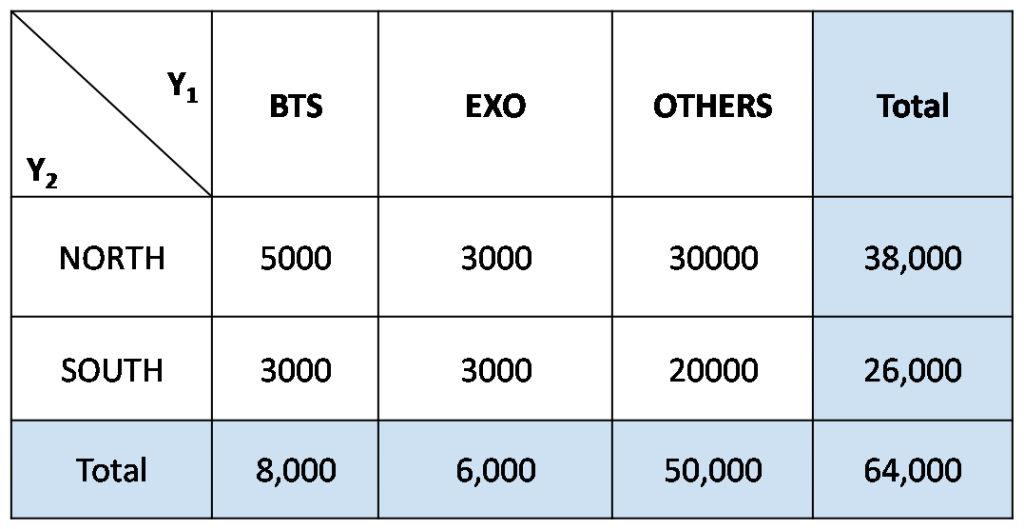

Let's see an example. Let the sample space be S = {third-grade middle school students in Seoul}, where $Y_1$ represents a student's favorite idol group and $Y_2$ represents the area where the student lives. $Y_1$ takes three values, {'BTS', 'EXO', 'OTHERS'}, and $Y_2$ takes two values, {'NORTH', 'SOUTH'}. We can then assign a joint probability to each outcome $(Y_1, Y_2)$.

Try to answer the questions below.

- $P(Y_1 = \text{'BTS'}) = ?$

- $P(Y_2 = \text{'SOUTH'}) = ?$

- $P(Y_1 = \text{'BTS'}, Y_2 = \text{'SOUTH'}) = ?$

The answer to the last question is 3/64. Please verify it yourself.
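The first two questions ask for marginal probabilities, which come from summing the joint probabilities over the other variable. Below is a rough sketch of that computation. Only the ('BTS', 'SOUTH') entry of 3/64 is taken from this post. Every other number in the table is a placeholder I made up so that the table sums to 1, so the printed marginals are illustrative and are not the answers to the questions above. The helper `marginal` is also my own name.

```python
from fractions import Fraction

# Joint table keyed by (Y1, Y2). Only ("BTS", "SOUTH") = 3/64 comes from the post;
# all other entries are hypothetical placeholders chosen to sum to 1.
joint = {
    ("BTS", "NORTH"): Fraction(21, 64),
    ("BTS", "SOUTH"): Fraction(3, 64),
    ("EXO", "NORTH"): Fraction(10, 64),
    ("EXO", "SOUTH"): Fraction(14, 64),
    ("OTHERS", "NORTH"): Fraction(9, 64),
    ("OTHERS", "SOUTH"): Fraction(7, 64),
}


def marginal(joint, axis, value):
    """Sum the joint probabilities of all outcomes whose `axis` entry equals `value`."""
    return sum(p for outcome, p in joint.items() if outcome[axis] == value)


p_bts = marginal(joint, 0, "BTS")      # P(Y1 = 'BTS'), summed over Y2
p_south = marginal(joint, 1, "SOUTH")  # P(Y2 = 'SOUTH'), summed over Y1

print(p_bts, p_south, joint[("BTS", "SOUTH")])
```

With the real table you would only need to replace the placeholder entries; the summation stays the same.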

2. Chain Rule

To derive the chain rule, the joint probability can be thought of as a sequence of consecutive trials.

$$P(x, y) = P(x \text{ and } y)$$

We can think of this as $y$ followed by $x$, or vice versa.

I will not introduce the concept of the joint probability density function here. Instead, I want to explain the chain rule by treating the joint probability as 'consecutive events'.

We can describe consecutive events as follows.

$$P(x \text{ and } y) = P(x) \cdot P(y|x)$$

i.e.

$$\text{probability of } x \rightarrow y : P(x) \cdot P(y|x)$$
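To make the 'consecutive events' picture concrete, here is a small sketch with an example of my own (it is not from the textbook): drawing two cards without replacement, where $x$ is "the first card is an ace" and $y$ is "the second card is an ace". The code compares $P(x) \cdot P(y|x)$ with a brute-force count over all ordered pairs of cards.

```python
from fractions import Fraction
from itertools import permutations

# Simplified deck: only "ace or not" matters for this example.
deck = ["A"] * 4 + ["other"] * 48

# Consecutive-events view: first draw, then second draw given the first.
p_x = Fraction(4, 52)          # P(first card is an ace)
p_y_given_x = Fraction(3, 51)  # P(second is an ace | first was an ace)
product_rule = p_x * p_y_given_x

# Brute force: enumerate every ordered pair of distinct card positions.
pairs = list(permutations(range(len(deck)), 2))
favorable = sum(1 for i, j in pairs if deck[i] == "A" and deck[j] == "A")
brute_force = Fraction(favorable, len(pairs))

print(product_rule, brute_force)
```

Both values come out as 1/221, which is what we expect if the joint probability really is the product of the two consecutive steps.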

What about the case $x \rightarrow y \rightarrow z$?

It can be decomposed into two steps.

1. $x \rightarrow y, z$

2. $x, y \rightarrow z$

At the first step,

$$\text{probability of } x \rightarrow y, z : P(x) \cdot P(y, z|x)$$

At the second step, we expand $P(y, z|x)$ carefully with $x$ given, using $P(y, z|x) = P(z|x, y) \cdot P(y|x)$:

$$\text{probability of } x, y \rightarrow z : P(x) \cdot P(z|x, y) \cdot P(y|x)$$

In the same way, we can derive the joint probability of $n$ events $x_1, x_2, \cdots, x_n$:

$$P(x_1, x_2, \cdots, x_n) = P(x_n|x_{n-1}, \cdots, x_1) \cdots P(x_2|x_1) \cdot P(x_1)$$
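As a sanity check, the chain rule can be verified numerically for $n = 3$. The sketch below assumes a made-up joint table over three binary variables (the weights 1 to 8 are arbitrary and only chosen so that every probability is positive). The helper `prob` is a hypothetical name of mine. Each conditional is computed from the joint table as a ratio of marginals, and the product is compared with the joint entry.

```python
from fractions import Fraction
from itertools import product

# Hypothetical joint table over (x, y, z) in {0, 1}^3; weights 1..8 sum to 36.
weights = [1, 2, 3, 4, 5, 6, 7, 8]
joint = {xyz: Fraction(w, 36) for xyz, w in zip(product([0, 1], repeat=3), weights)}

NAMES = ("x", "y", "z")


def prob(**fixed):
    """Marginal probability that the named variables take the given values."""
    return sum(
        p for outcome, p in joint.items()
        if all(outcome[NAMES.index(name)] == value for name, value in fixed.items())
    )


def chain_product(x, y, z):
    """P(x) * P(y|x) * P(z|x,y), with each conditional computed from the joint table."""
    p_x = prob(x=x)
    p_y_given_x = prob(x=x, y=y) / p_x
    p_z_given_xy = prob(x=x, y=y, z=z) / prob(x=x, y=y)
    return p_x * p_y_given_x * p_z_given_xy


chain_rule_holds = all(chain_product(*xyz) == p for xyz, p in joint.items())
print(chain_rule_holds)
```

Since every conditional here is defined as a ratio of marginals, the product telescopes back to the joint probability, which is exactly the content of the chain rule.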